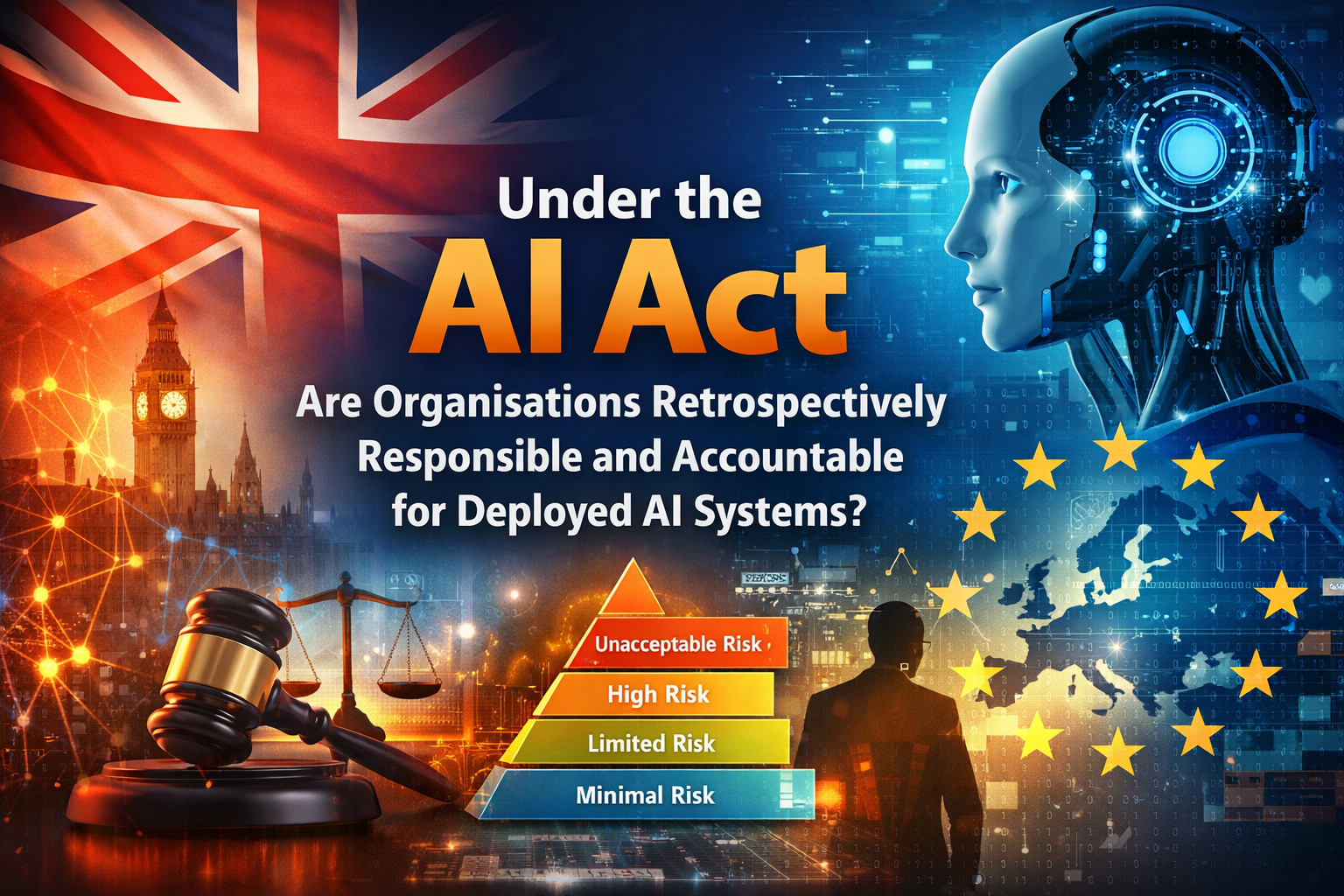

The introduction of the EU AI Act represents a fundamental shift in how artificial intelligence is governed, not just within the European Union, but globally.

Although the United Kingdom is no longer an EU Member State, it would be a serious miscalculation to assume that UK organisations are insulated from its effects. In reality, the Act will have material, direct, and unavoidable implications for UK companies—particularly those operating across borders or deploying AI in regulated sectors.

At present, there appears to be limited preparation across many organisations, with some relying on the UK’s non-EU status as a form of regulatory protection. This assumption is flawed. The AI Act is explicitly designed to extend beyond EU borders, and its impact will be felt wherever AI systems intersect with the European market or its citizens.

1. Extraterritorial Reach: Regulation Beyond Borders

The AI Act is not confined to organisations physically located within the EU. Its scope is deliberately broad and applies to:

- Companies placing AI systems on the EU market

- Organisations whose AI outputs are used within the EU

- Providers and deployers whose systems affect people located in the EU

Implications for UK Organisations

Any UK-based organisation that:

- Sells AI-enabled products into the EU

- Provides AI-driven services to EU clients

- Uses AI systems that influence or interact with EU individuals

must comply with the Act, irrespective of its domestic regulatory environment.
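As a first-pass triage, the three conditions above can be sketched as a simple checklist. This is an illustrative sketch only: the `AIActivity` class, its field names, and the helper functions are hypothetical, and a positive result means "needs a proper scope review", not a legal determination.

```python
from dataclasses import dataclass


@dataclass
class AIActivity:
    """One AI-related activity of a UK organisation (hypothetical model)."""
    name: str
    sells_into_eu: bool = False           # AI-enabled product sold into the EU
    serves_eu_clients: bool = False       # AI-driven service provided to EU clients
    affects_eu_individuals: bool = False  # system influences or interacts with EU individuals


def eu_touchpoints(activity: AIActivity) -> list[str]:
    """Return the reasons, if any, why an activity may fall within the Act's scope."""
    reasons = []
    if activity.sells_into_eu:
        reasons.append("sells AI-enabled products into the EU")
    if activity.serves_eu_clients:
        reasons.append("provides AI-driven services to EU clients")
    if activity.affects_eu_individuals:
        reasons.append("influences or interacts with EU individuals")
    return reasons


def needs_scope_review(activity: AIActivity) -> bool:
    """Any single touchpoint is enough to warrant a review."""
    return bool(eu_touchpoints(activity))
```

Running `eu_touchpoints` over every activity in the organisation gives a quick list of where the EU connection lies, which is usually the first question legal counsel will ask.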

This reflects a familiar pattern seen with GDPR—where regulatory influence extends through market access rather than geography. The result is clear: if your AI touches the EU, the AI Act applies.

2. Retrospective Responsibility: No Safe Harbour for Legacy AI

A critical and often misunderstood aspect of the AI Act is its treatment of existing AI systems.

Organisations are not granted a blanket exemption for systems deployed prior to the Act coming into force. Instead, responsibility is determined by ongoing use, risk profile, and system evolution.

(a) No “Grandfathering” Exemption

The Act does not provide a general exemption for legacy AI systems. Existing deployments are not automatically shielded from compliance obligations.

(b) Risk-Based Obligations Apply to Ongoing Use

The AI Act operates on a risk classification model, commonly summarised as four tiers: unacceptable (prohibited), high-risk, limited risk, and minimal risk.

If a legacy system:

- Continues to operate, and

- Falls within a regulated risk category

then compliance obligations can apply even though it was deployed before the Act took effect, subject to the Act's transitional provisions.

(c) “Substantial Modification” as a Trigger

Even where a system predates the Act, any significant modification post-enforcement will result in it being treated as a new system.

This triggers:

- Full compliance requirements

- Reclassification under the risk model

- Potential conformity assessments

This is particularly important in modern AI environments where systems are frequently updated, retrained, or extended.
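The two triggers described in (b) and (c) can be sketched as a small check over a legacy system record. This follows the article's simplified framing rather than the legal test: the `LegacySystem` fields, the `RiskCategory` values, and the trigger wording are all hypothetical, and the sketch ignores transitional provisions and sector-specific rules.

```python
from dataclasses import dataclass
from enum import Enum


class RiskCategory(Enum):
    HIGH = "high-risk"
    LIMITED = "limited risk"
    UNREGULATED = "outside a regulated category"


@dataclass
class LegacySystem:
    name: str
    in_operation: bool
    risk_category: RiskCategory
    substantially_modified: bool  # significant change made after the Act applies


def obligation_triggers(system: LegacySystem) -> list[str]:
    """List which of the two triggers (ongoing use, modification) are met."""
    triggers = []
    # Trigger (b): still running and inside a regulated risk category
    if system.in_operation and system.risk_category is not RiskCategory.UNREGULATED:
        triggers.append("ongoing use in a regulated risk category")
    # Trigger (c): a substantial modification means it is treated as a new system
    if system.substantially_modified:
        triggers.append("substantial modification: treat as a new system")
    return triggers
```

An empty list does not prove a system is out of scope; it only means neither of the two triggers modelled here was flagged.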

(d) Transitional Periods and Phased Enforcement

The Act entered into force on 1 August 2024 and applies in phases:

- Prohibited practices first, from 2 February 2025 (6 months after entry into force)

- High-risk obligations later, from 2 August 2026 for most systems and 2 August 2027 for high-risk AI embedded in regulated products (24 to 36 months)

This provides a window for organisations to act—but not to delay.
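To make the phasing concrete, the sketch below records the headline application dates and reports which phases already apply on a given day. The dates reflect the schedule in the Act as published (entry into force on 1 August 2024) and should be verified against the current legal text, since timelines can be amended. The `MILESTONES` labels and the `applicable_on` helper are hypothetical names used for illustration.

```python
from datetime import date

# Headline application dates, assumed from the Act's published schedule.
# Verify against the current legal text before relying on them.
MILESTONES = [
    (date(2025, 2, 2), "prohibited practices"),
    (date(2026, 8, 2), "most high-risk obligations"),
    (date(2027, 8, 2), "high-risk AI embedded in regulated products"),
]


def applicable_on(day: date) -> list[str]:
    """Return the phases whose application date has been reached on `day`."""
    return [label for start, label in MILESTONES if day >= start]
```

For example, `applicable_on(date.today())` gives a quick view of which phases an organisation should already be treating as live.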

(e) Accountability Remains with Providers and Deployers

Responsibility under the Act is shared between two roles, and it persists for as long as the system is in use:

- Providers (developers/vendors)

- Deployers (organisations using the system)

In practice, most UK organisations using third-party AI will retain significant accountability, particularly in relation to:

- How the system is used

- Ensuring lawful and fair outcomes

- Maintaining human oversight and control

This is a crucial point: outsourcing AI does not outsource accountability.

3. Strategic Interpretation: An AI Transformation Imperative

From a business transformation perspective, the implications are profound.

Legacy AI systems cannot be treated as static assets or ignored from a compliance standpoint. Instead, they must be managed as part of a living AI portfolio.

Key Realisations

- Legacy systems are not a compliance safe haven

- AI estates are often fragmented, undocumented, and poorly governed

- Regulatory exposure is cumulative and systemic, not isolated

4. A Practical Enterprise Response

A robust organisational response should be structured, programme-led, and aligned with established governance disciplines.

Core Components of an Effective Approach

- Enterprise-wide AI system inventory: a complete catalogue of all AI systems in operation

- Risk classification mapping: alignment of each system to the AI Act risk categories

- Remediation roadmap: prioritised based on regulatory exposure and business impact

- Governance integration: embedding AI oversight into existing frameworks such as PRINCE2 and MSP (Managing Successful Programmes)
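The first three components (inventory, classification, roadmap) can be sketched as a small data structure and a sort. This is a minimal illustration under stated assumptions: the `InventoryEntry` fields, the four category labels in `RISK_PRIORITY`, and the 1-to-5 business impact scale are hypothetical choices, not something the Act prescribes.

```python
from dataclasses import dataclass

# Hypothetical ordering: lower number = remediate sooner
RISK_PRIORITY = {"prohibited": 0, "high": 1, "limited": 2, "minimal": 3}


@dataclass
class InventoryEntry:
    name: str
    owner: str
    risk_category: str    # must be a key of RISK_PRIORITY
    business_impact: int  # 1 (low) to 5 (high), hypothetical scale


def remediation_roadmap(inventory: list[InventoryEntry]) -> list[InventoryEntry]:
    """Order systems by regulatory exposure first, then by business impact."""
    unclassified = [e.name for e in inventory if e.risk_category not in RISK_PRIORITY]
    if unclassified:
        # An unclassified system is itself a finding: it cannot be prioritised yet
        raise ValueError(f"unclassified systems: {unclassified}")
    return sorted(
        inventory,
        key=lambda e: (RISK_PRIORITY[e.risk_category], -e.business_impact),
    )
```

Raising an error on unclassified entries is a deliberate design choice here: it forces the classification step to be completed before a roadmap is produced, rather than silently placing unknown systems at the bottom of the list.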

This is not a compliance exercise in isolation—it is a full-scale operating model shift.

Conclusion

The EU AI Act fundamentally changes the question from:

“When was this AI system deployed?”

to:

“Is this AI system currently in use, and does it pose regulated risk?”

For UK organisations, the answer carries direct regulatory consequences.

Opinion

View: The absence of grandfathering provisions is both intentional and necessary.

Rationale: Allowing legacy AI systems to bypass regulation would introduce systemic risk, particularly in high-impact domains such as finance, employment, and public services.

However, the operational burden this creates should not be underestimated. Many organisations have fragmented and poorly understood AI estates, and the scale of remediation required is likely to be significantly greater than anticipated.